
The "UTF-8 without BOM" files don't have any header bytes.From what I can tell, Notepad++ describes them as "UCS-2" since it doesn't support certain facets of UTF-16.

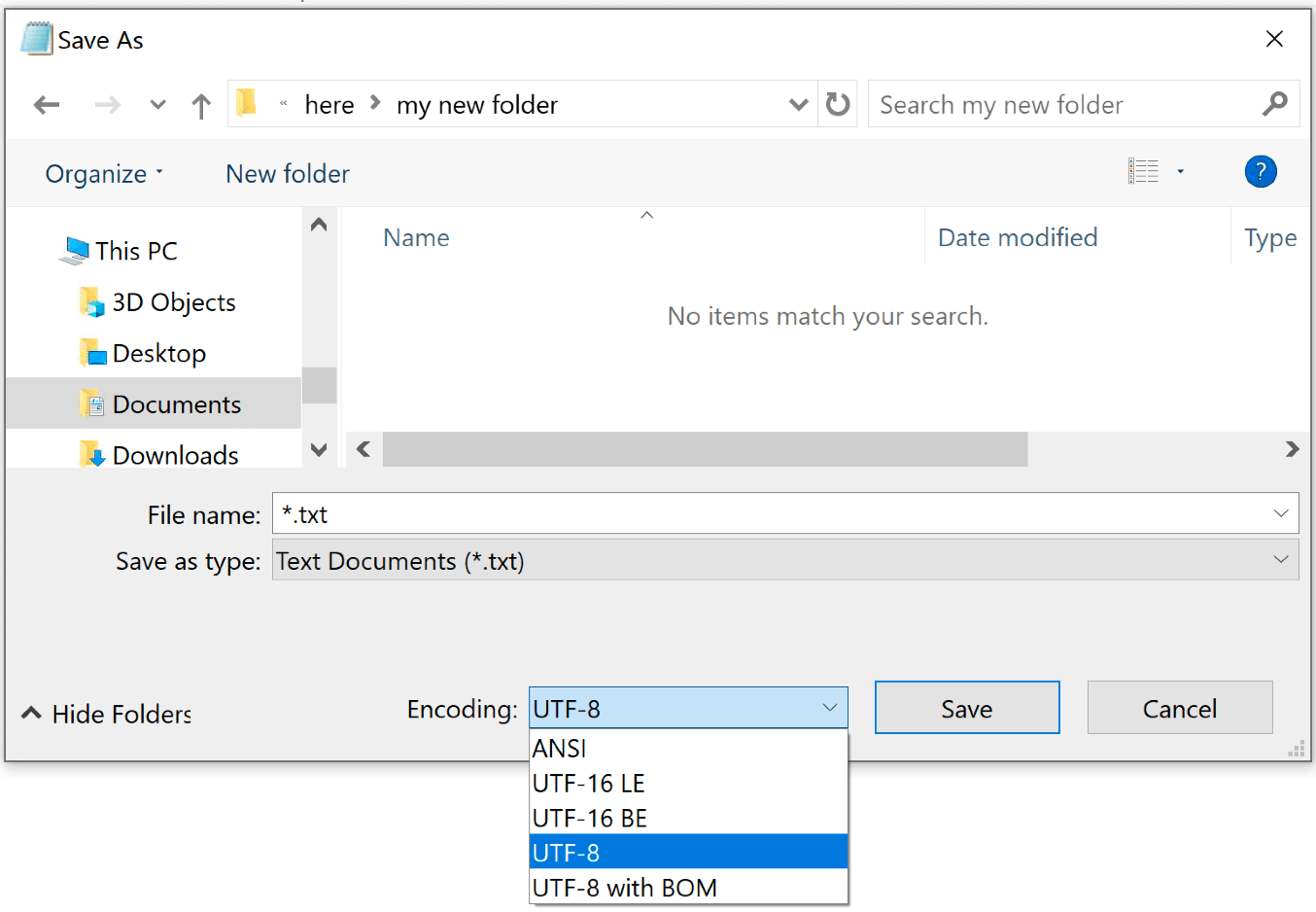

The "UCS-2 Little Endian" files are UTF-16 files (based on what I understand from the info here) so probably start with 0xFF,0xFE as the first 2 bytes.And it appears that some users in Windows, using Notepad++ to write their scripts, dont default to UTF-8 but most often ANSI. Sometimes it does get it wrong though - that's why that 'Encoding' menu is there, so you can override its best guess. Typically, I use readLines () mytextcontent <- readLines (con 'path\to\file.txt') The distant app has problem to deal with non UTF-8 text encoding. Notepad++ does its best to guess what encoding a file is using, and most of the time it gets it right. Or it might be a different file type entirely. However, it might be an ISO-8859-1 file which happens to start with the characters . However, even reading the header you can never be sure what encoding a file is really using.įor example, a file with the first three bytes 0圎F,0xBB,0xBF is probably a UTF-8 encoded file.

Files generally indicate their encoding with a file header.
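As a rough illustration of the header check described above, the following R sketch reads the first bytes of a file and maps a recognised byte order mark to an encoding name. The helper names `guess_bom_encoding` and `read_text_guess`, and the `"latin1"` fallback, are assumptions made for this example and are not part of R or Notepad++. As noted above, a matching header is only a hint, and a file without one could be UTF-8, ANSI, or something else.

```r
# Hypothetical helper: guess an encoding from a file's leading bytes (its BOM).
# Returns NA when no recognised BOM is present; that does NOT mean "not UTF-8".
guess_bom_encoding <- function(path) {
  bytes <- readBin(path, what = "raw", n = 3L)
  starts_with <- function(sig) {
    length(bytes) >= length(sig) &&
      identical(bytes[seq_along(sig)], as.raw(sig))
  }
  if (starts_with(c(0xEF, 0xBB, 0xBF))) return("UTF-8-BOM")
  if (starts_with(c(0xFF, 0xFE))) return("UTF-16LE")  # Notepad++: "UCS-2 Little Endian"
  if (starts_with(c(0xFE, 0xFF))) return("UTF-16BE")
  NA_character_
}

# Hypothetical wrapper: read lines using the guessed encoding, or a fallback
# chosen by the caller when there is no BOM (assumed here to be "latin1").
read_text_guess <- function(path, fallback = "latin1") {
  enc <- guess_bom_encoding(path)
  if (is.na(enc)) enc <- fallback
  con <- file(path, open = "r", encoding = enc)
  on.exit(close(con))
  readLines(con, warn = FALSE)
}

# Small demonstration with a temporary file: UTF-8 BOM followed by "hi\n".
tmp <- tempfile(fileext = ".txt")
writeBin(as.raw(c(0xEF, 0xBB, 0xBF, 0x68, 0x69, 0x0A)), tmp)
guess_bom_encoding(tmp)  # "UTF-8-BOM"
read_text_guess(tmp)     # "hi"
```

When the guess is wrong, passing an explicit `fallback` plays the same role as overriding Notepad++'s choice through its 'Encoding' menu.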
